Make AI Hiring Workflows Safer

Recruiting AI Is Moving Too Fast for Many Teams

AI recruiting tools are gaining access to ATS records, recruiter communication, candidate data, calendars, and internal documentation.

Many teams are focused on speed. The more important question is whether the workflow stays explainable, reviewable, and controllable once AI starts influencing hiring decisions.

Make your AI workflows safer before you make them faster.

2-Minute Skim

3 things to know

AI agents are moving toward controlled execution: plans before action, scoped permissions, approvals, sandboxing, and audit logs.

Verifiable retrieval is becoming the minimum standard. If an AI workflow cannot cite the exact source, page, file, or policy, it shouldn’t influence hiring decisions.

Voice AI is improving, but recruiting teams should limit usage to scheduling, candidate support, transcription, and note capture before using it in evaluative workflows.

2 things to test

Build a 45-minute permission map for one recruiting workflow.

Create a cited intake-to-scorecard assistant that links every role requirement back to approved source material.

1 thing to ignore

Vendor claims that AI interviewing is suddenly “ready” because voice quality improved. Better speech does not solve fairness, validity, disclosure, candidate trust, or accountability.

Executive Brief

The AI industry is shifting from capability toward operational control.

OpenAI published how it safely runs Codex using sandboxing, approvals, network policy, identity controls, and agent telemetry. Notion introduced Plan Mode so agents ask clarifying questions before making large changes. Google upgraded Gemini File Search with multimodal retrieval and page-level citations. GitHub expanded organization-level secret controls and pre-commit scanning for AI agents. OpenAI also released stronger realtime voice and transcription models.

Many recruiting teams will interpret these releases as permission to automate more work. The releases point the other way: AI deployment requires more structure, not less. The teams that scale AI safely will be the ones that can explain exactly how the automation works, what data it touched, what evidence it used, and where human judgment still matters.

What Matters This Week

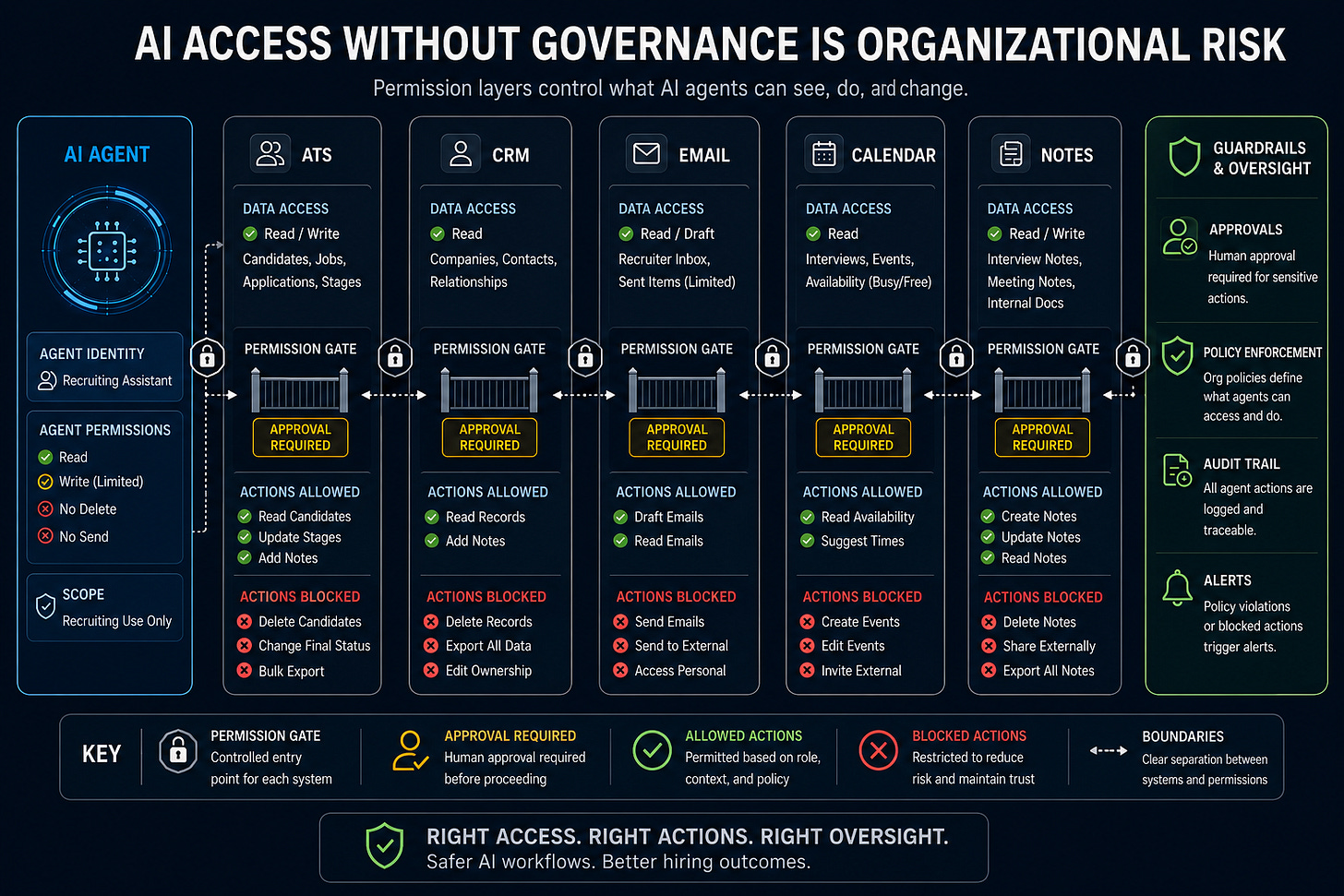

1. Agent safety is becoming an operating model

OpenAI’s Codex safety write-up is one of the clearer operational documents on running AI agents. The framework includes sandbox boundaries, approval rules, managed network access, identity controls, command restrictions, and telemetry. Recruiting teams should think about AI systems the same way.

Recruiting use case

Map one AI-assisted sourcing or screening workflow by:

allowed data

allowed actions

blocked actions

approval points

audit trail

👉 Takeaway: Don’t expand agent usage until you can explain:

what the agent can access

what it can do

what it can’t do

where human review happens
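One way to make that explanation concrete is to write the map down as data instead of leaving it in a slide. The sketch below is a minimal illustration, not a vendor feature: the class, the action names, and the data labels are all hypothetical, and it assumes that anything not explicitly listed should be blocked.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOWED = "allowed"
    APPROVAL_REQUIRED = "approval-required"
    BLOCKED = "blocked"


@dataclass
class PermissionMap:
    """Hypothetical one-workflow control map: data, actions, approvals, audit trail."""
    workflow: str
    allowed_data: set = field(default_factory=set)
    allowed_actions: set = field(default_factory=set)
    approval_actions: set = field(default_factory=set)
    blocked_actions: set = field(default_factory=set)
    audit_trail: list = field(default_factory=list)

    def check(self, action: str, data: str) -> Decision:
        """Decide whether an action on a data type may proceed, and record the decision."""
        if action in self.blocked_actions or data not in self.allowed_data:
            decision = Decision.BLOCKED
        elif action in self.approval_actions:
            decision = Decision.APPROVAL_REQUIRED
        elif action in self.allowed_actions:
            decision = Decision.ALLOWED
        else:
            # Assumption: unknown actions are blocked until someone maps them.
            decision = Decision.BLOCKED
        self.audit_trail.append(
            {"action": action, "data": data, "decision": decision.value}
        )
        return decision


# Illustrative values only; replace with your own workflow's data and actions.
sourcing_map = PermissionMap(
    workflow="AI-assisted sourcing research",
    allowed_data={"public_profile", "job_description"},
    allowed_actions={"summarize_profile"},
    approval_actions={"draft_outreach"},
    blocked_actions={"reject_candidate", "update_ats_record"},
)
```

Calling `sourcing_map.check("draft_outreach", "public_profile")` returns the approval-required decision, and every check lands in `audit_trail`, so the map doubles as a record of what the agent tried to do.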

Source: OpenAI, “Running Codex safely at OpenAI”

2. Cited retrieval is now the minimum bar for recruiting knowledge work

Google’s Gemini API File Search now supports multimodal retrieval, metadata filtering, and page-level citations. That matters because recruiting workflows can depend on AI summarization for:

intake notes

interview feedback

scorecards

candidate summaries

policy interpretation

hiring manager requests

Without citations, AI summaries create confident ambiguity: the output sounds certain, but no one can check where it came from.

Recruiting use case

Build a role-intake assistant that extracts:

requirements

competencies

selling points

interview criteria

...while linking every output back to the original intake note, hiring-manager quote, or approved job document.

👉 Takeaway: Require citations for AI-generated claims involving:

role criteria

candidate evidence

policy interpretation

hiring-manager feedback

If the workflow can’t explain where information came from, it shouldn’t influence hiring decisions.
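A citation rule is easier to enforce when it is checked mechanically before anything reaches a recruiter. The sketch below assumes each AI-generated claim arrives as a small record with a source file and a locator (page, section, or quote). The `Claim` shape and the sample claims are hypothetical, and this does not use any specific vendor's citation format.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Claim:
    text: str
    source_file: Optional[str] = None  # e.g. the intake note or approved job document
    locator: Optional[str] = None      # page number, section, or exact quote


def split_by_citation(claims: List[Claim]) -> Tuple[List[Claim], List[Claim]]:
    """Return (cited, uncited). A claim counts as cited only with both a source and a locator."""
    cited, uncited = [], []
    for claim in claims:
        has_source = bool(claim.source_file and claim.source_file.strip())
        has_locator = bool(claim.locator and claim.locator.strip())
        if has_source and has_locator:
            cited.append(claim)
        else:
            uncited.append(claim)
    return cited, uncited


# Illustrative claims only.
claims = [
    Claim("Must have 3+ years of B2B sales experience", "intake_notes.docx", "page 2"),
    Claim("Candidate should be a culture fit"),  # no source, so it is held back
]
cited, uncited = split_by_citation(claims)
```

Only the `cited` list moves forward. The `uncited` list goes back to a human as open questions. Note that this checks that a citation exists, not that it is accurate, so a reviewer still needs to spot-check the sources.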

Source: Google, “Gemini API File Search is now multimodal”

3. Agents should ask questions before touching important work

Notion’s new Plan Mode has agents pause, ask clarifying questions, and create a plan before making changes. Recruiting teams need the same pattern before AI systems:

rewrite scorecards

modify candidate summaries

edit outreach

update workflows

touch CRM or ATS data

Recruiting use case

Add a required “plan first” step before any AI workflow makes changes. The agent should:

summarize the objective

list assumptions

identify source material

explain proposed changes

highlight risks

require approval
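The plan-first pattern can be sketched as a gate in code: the agent fills in a plan, and nothing executes until the plan is complete and a named person has approved it. This is a generic illustration and not how Notion implements Plan Mode. The `Plan` fields, the `execute` function, and the choice of which sections are mandatory are assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


class PlanNotApproved(Exception):
    """Raised when an agent tries to act before its plan is complete and approved."""


@dataclass
class Plan:
    objective: str
    source_material: List[str]
    proposed_changes: List[str]
    assumptions: List[str] = field(default_factory=list)
    risks: List[str] = field(default_factory=list)
    approved_by: Optional[str] = None  # name of the human reviewer, once they sign off

    def missing_sections(self) -> List[str]:
        """List required sections that are still empty."""
        required = {
            "objective": self.objective.strip(),
            "source_material": self.source_material,
            "proposed_changes": self.proposed_changes,
        }
        return [name for name, value in required.items() if not value]


def execute(plan: Plan, apply_change: Callable[[str], str]) -> List[str]:
    """Apply each proposed change, but only after the plan is complete and approved."""
    gaps = plan.missing_sections()
    if gaps:
        raise PlanNotApproved(f"Plan is incomplete: {', '.join(gaps)}")
    if not plan.approved_by:
        raise PlanNotApproved("Plan has no human approval")
    return [apply_change(change) for change in plan.proposed_changes]
```

The design choice worth copying is that approval is a field on the plan itself, so the log of what was approved and the log of what was executed cannot drift apart.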

👉 Takeaway: Execution speed without alignment can snowball into operational debt. Fast wrong is still wrong.

Source: Notion, “Plan Mode”

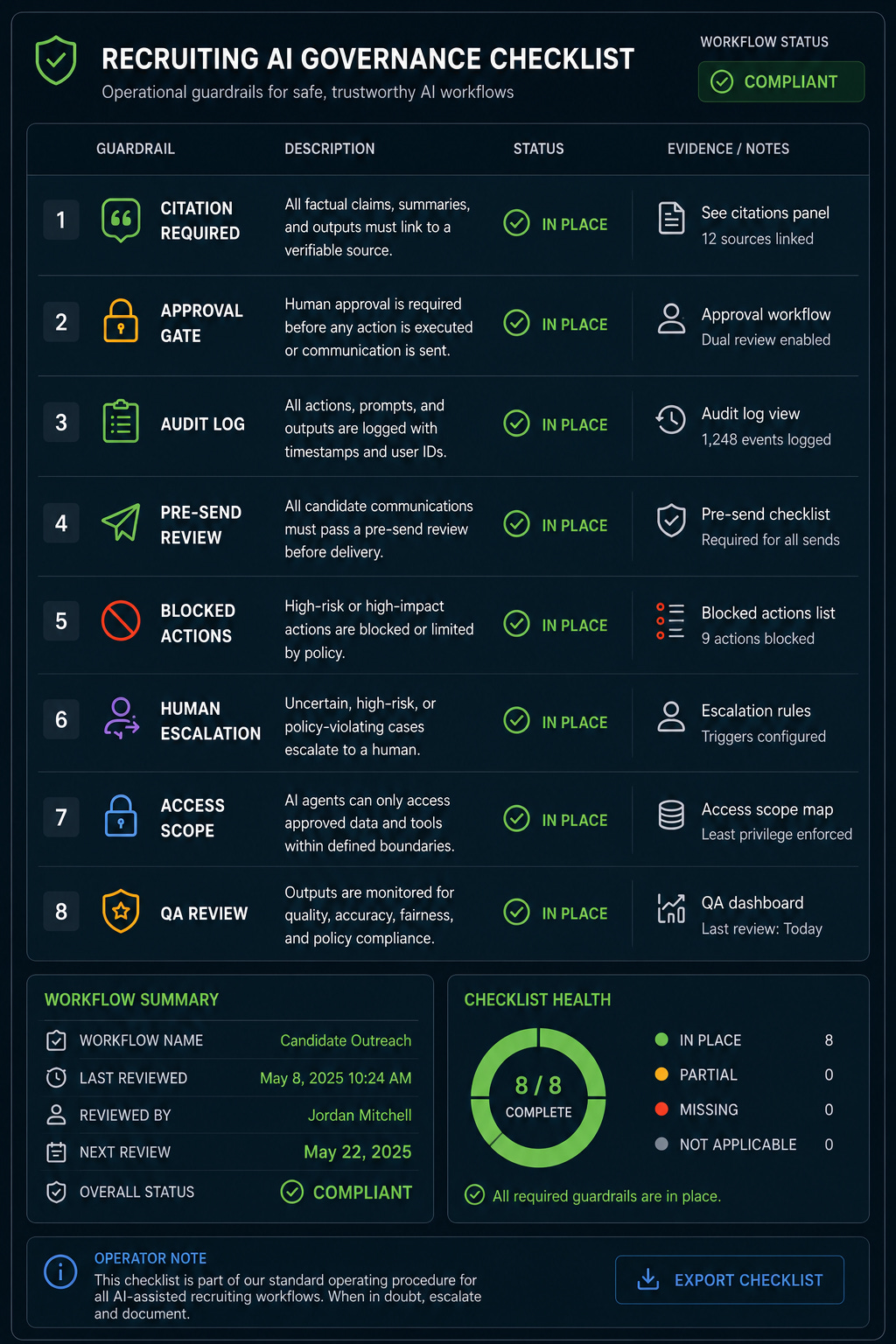

Playbook: Build an Agent Permission Map

Goal

Create a one-page control map for one recruiting AI workflow before expanding usage.

Setup

Pick one workflow that uses AI today or will be tested this month: sourcing research, outreach drafting, interview packet prep, candidate FAQ support, or hiring-manager update drafts.

Name a workflow owner, reviewer, and escalation contact. Define whether the workflow touches candidate data, employee data, compensation, interview notes, assessment data, or protected information.

Steps

Write the workflow objective in one sentence.

List every input the AI receives.

List every system the AI or user touches during the workflow.

Mark each action as allowed, approval-required, or blocked.

Define required evidence in the output: citation, source link, page number, candidate-provided fact, or recruiter note.

Add a pre-send review checklist for sensitive outputs.

Decide what gets logged: prompt, inputs, output, reviewer, changes made, final status.

Run the workflow on 3 examples and capture failure patterns.

Tighten permissions or prompts before expanding to the team.
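For the logging step, a simple starting point is one structured record per AI-assisted run. The sketch below assumes a JSON Lines file and three status labels. Both are illustrative choices, not requirements, and the function names are hypothetical.

```python
import json
from datetime import datetime, timezone

FINAL_STATUSES = {"approved", "edited", "rejected"}  # assumed labels; use your own


def build_log_entry(prompt, inputs, output, reviewer, changes_made, final_status):
    """Build one audit record covering the fields listed in the steps above."""
    if not reviewer:
        raise ValueError("Every logged run needs a named reviewer")
    if final_status not in FINAL_STATUSES:
        raise ValueError(f"Unknown final status: {final_status}")
    return {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "inputs": list(inputs),
        "output": output,
        "reviewer": reviewer,
        "changes_made": changes_made,
        "final_status": final_status,
    }


def append_log(path, entry):
    """Append the record as one JSON line so the log stays easy to search."""
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(json.dumps(entry) + "\n")
```

Because these records can contain candidate data, the log file belongs in a system that is already approved for that data.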

Prompt

You are helping create a permission map for a recruiting AI workflow.

Workflow:

[describe workflow]

Systems involved:

[ATS, CRM, email, calendar, assessment tool, HRIS, docs, spreadsheets]

Data involved:

[candidate profiles, resumes, notes, compensation, interview feedback, job description]

Create a table with:

- Step

- Data touched

- System touched

- AI action

- Risk level

- Allowed / approval-required / blocked

- Required citation or evidence

- Human reviewer

- Log required

Rules:

- Do not allow the AI to make hiring decisions.

- Do not allow protected-class inference.

- Flag any action that sends, edits, deletes, ranks, rejects, or updates candidate records.

- Prefer tighter permissions where the risk is unclear.

Common mistakes

Mapping the tool but not the data it touches.

Treating “draft only” as safe without checking what the draft includes.

Letting agents use personal logins without access review.

Forgetting calendar, email, and notes systems because they feel routine.

Measuring time saved before measuring error rate and rework.

When NOT to use this

Do not use it to justify AI workflows that already violate policy.

Do not use it for final selection or rejection decisions.

Do not use it when no one can review the output.

Do not use it with sensitive data in tools not approved for that data.

What good looks like

Every AI action has a permission status.

Every sensitive output has a human review step.

Every factual claim has a source.

The workflow has named owners, not vague accountability.

The team can explain what AI did and what a human approved.

Prompt Chain: Cited Role Intake to Scorecard

Use case

Turn intake notes and job documents into a recruiter-reviewed scorecard with citations.

System prompt

You are a recruiting operations assistant. Your job is to structure role information, not make hiring decisions.

Rules:

- Cite the source for every requirement.

- Separate must-have criteria from nice-to-have preferences.

- Flag vague, biased, illegal, or non-evidence-based criteria.

- Do not infer requirements that are not present in the source material.

- Do not create candidate rankings or rejection logic.

- Produce recruiter-reviewable output.

User prompt 1: Extract requirements

Review the role materials below.

Materials:

[paste intake notes, job description, hiring manager notes, interview plan]

Extract:

- Must-have requirements

- Nice-to-have preferences

- Responsibilities

- Selling points

- Open questions

- Risky or vague criteria

For each item, include the exact source quote or source reference.

User prompt 2: Build scorecard draft

Using only the extracted requirements, draft a structured scorecard.

Include:

- Competency

- What strong evidence looks like

- What weak evidence looks like

- Suggested interview question

- Source citation

- Reviewer notes field

Flag any competency that lacks clear source evidence.

User prompt 3: Challenge the scorecard

Audit this scorecard before it is used.

Look for:

- Criteria that are too broad

- Criteria that invite bias

- Requirements that are preferences disguised as must-haves

- Missing evidence standards

- Unsupported assumptions

- Candidate experience risks

Return a short list of fixes and a revised version.
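If you want to run the three prompts as one repeatable chain, the wiring can be sketched as below. `call_model` is a hypothetical function you would supply for whatever AI tool your team has approved. It is not a real library call, and the prompt text is abbreviated from the versions above.

```python
from typing import Callable, Dict

SYSTEM_PROMPT = (
    "You are a recruiting operations assistant. "
    "Your job is to structure role information, not make hiring decisions."
)
EXTRACT_PROMPT = (
    "Review the role materials below.\n"
    "For each item, include the exact source quote or source reference.\n\n"
)
SCORECARD_PROMPT = (
    "Using only the extracted requirements, draft a structured scorecard.\n"
    "Flag any competency that lacks clear source evidence.\n\n"
)
AUDIT_PROMPT = (
    "Audit this scorecard before it is used.\n"
    "Return a short list of fixes and a revised version.\n\n"
)


def run_chain(materials: str, call_model: Callable[[str, str], str]) -> Dict[str, str]:
    """Run extract -> draft -> audit, feeding each step's output into the next."""
    extracted = call_model(SYSTEM_PROMPT, EXTRACT_PROMPT + materials)
    scorecard = call_model(SYSTEM_PROMPT, SCORECARD_PROMPT + extracted)
    audit = call_model(SYSTEM_PROMPT, AUDIT_PROMPT + scorecard)
    return {
        "extracted": extracted,
        "scorecard": scorecard,
        "audit": audit,
        # The chain never marks its own output as final; a recruiter does that.
        "status": "needs_recruiter_review",
    }


def fake_model(system: str, user: str) -> str:
    """Stand-in for a real model call, useful for testing the wiring."""
    return "[model output for: " + user.splitlines()[0] + "]"


result = run_chain("Intake notes: ...", fake_model)
```

Keeping all three intermediate outputs lets a reviewer trace a questionable scorecard line back to the extraction step that produced it.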

When this breaks

Intake notes are too vague.

The hiring manager has not agreed on must-haves.

The AI invents criteria to fill gaps.

The team treats the scorecard as final without human calibration.

The source documents contain biased or exclusionary language.

Fast Wins

Add “cite the source” requirements to your recruiting AI prompts.

Create a blocked-actions list:

reject candidates

rank final slates

infer protected traits

update ATS records

send candidate emails without approval

Add a pre-send review step for AI-generated recruiter communication.

Convert one intake into a cited scorecard draft and bring unresolved questions back to the hiring manager.

Ask IT which AI tools are currently approved for candidate data.

Strategic Experiments

Cited Intake Assistant

Hypothesis: Cited intake summaries reduce hiring-manager rework and improve scorecard clarity.

Test: Run 5 roles through the prompt chain and compare against current intake docs.

Measure: Missing criteria, hiring-manager edits, time to finalized scorecard, recruiter confidence.
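A small script is enough to compare the pilot against your current process. The sketch below assumes you track three of the measures per role. The field names are hypothetical and the numbers are placeholders for illustration, not results. Recruiter confidence is left out because it needs a survey, not a count.

```python
from statistics import mean

METRICS = ("missing_criteria", "manager_edits", "days_to_final_scorecard")


def summarize(roles):
    """Average each metric across a list of per-role records."""
    return {metric: mean(role[metric] for role in roles) for metric in METRICS}


def compare(baseline_roles, pilot_roles):
    """Return pilot average minus baseline average per metric (negative means fewer or faster)."""
    baseline, pilot = summarize(baseline_roles), summarize(pilot_roles)
    return {metric: pilot[metric] - baseline[metric] for metric in METRICS}


# Placeholder values for illustration only; replace with your own tracked roles.
baseline_roles = [
    {"missing_criteria": 3, "manager_edits": 6, "days_to_final_scorecard": 8},
    {"missing_criteria": 1, "manager_edits": 4, "days_to_final_scorecard": 6},
]
pilot_roles = [
    {"missing_criteria": 1, "manager_edits": 3, "days_to_final_scorecard": 5},
    {"missing_criteria": 1, "manager_edits": 1, "days_to_final_scorecard": 3},
]
difference = compare(baseline_roles, pilot_roles)
```

With only 5 roles, treat the differences as a signal for whether to keep testing, not as proof.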

Non-Evaluative Voice Agent

Hypothesis: Voice AI can reduce recruiter admin without damaging candidate trust if it avoids evaluation.

Test: Use voice for candidate FAQs, scheduling status, event support, or recruiter dictation.

Measure: Completion rate, candidate satisfaction, escalation rate, errors, disclosure clarity.

AI Workflow Control Map

Hypothesis: A permission map reduces security friction and prevents risky AI expansion.

Test: Map one workflow and review it with TA ops, legal, and IT.

Measure: Number of blocked actions found, approval gaps, data risks, time to approve pilot.

Recruiting teams are learning that every AI workflow will eventually need:

traceability

approval logic

evidence standards

permission controls

human accountability

The organizations that adapt early will have the advantage. If you’re building recruiting systems that need to scale without losing control, subscribe below.