The Next AI Recruiting Upgrade Is Operational Discipline

How to operationalize AI in recruiting with work queues, evaluation, and human review.

AI didn’t break recruiting. Lack of operational discipline did.

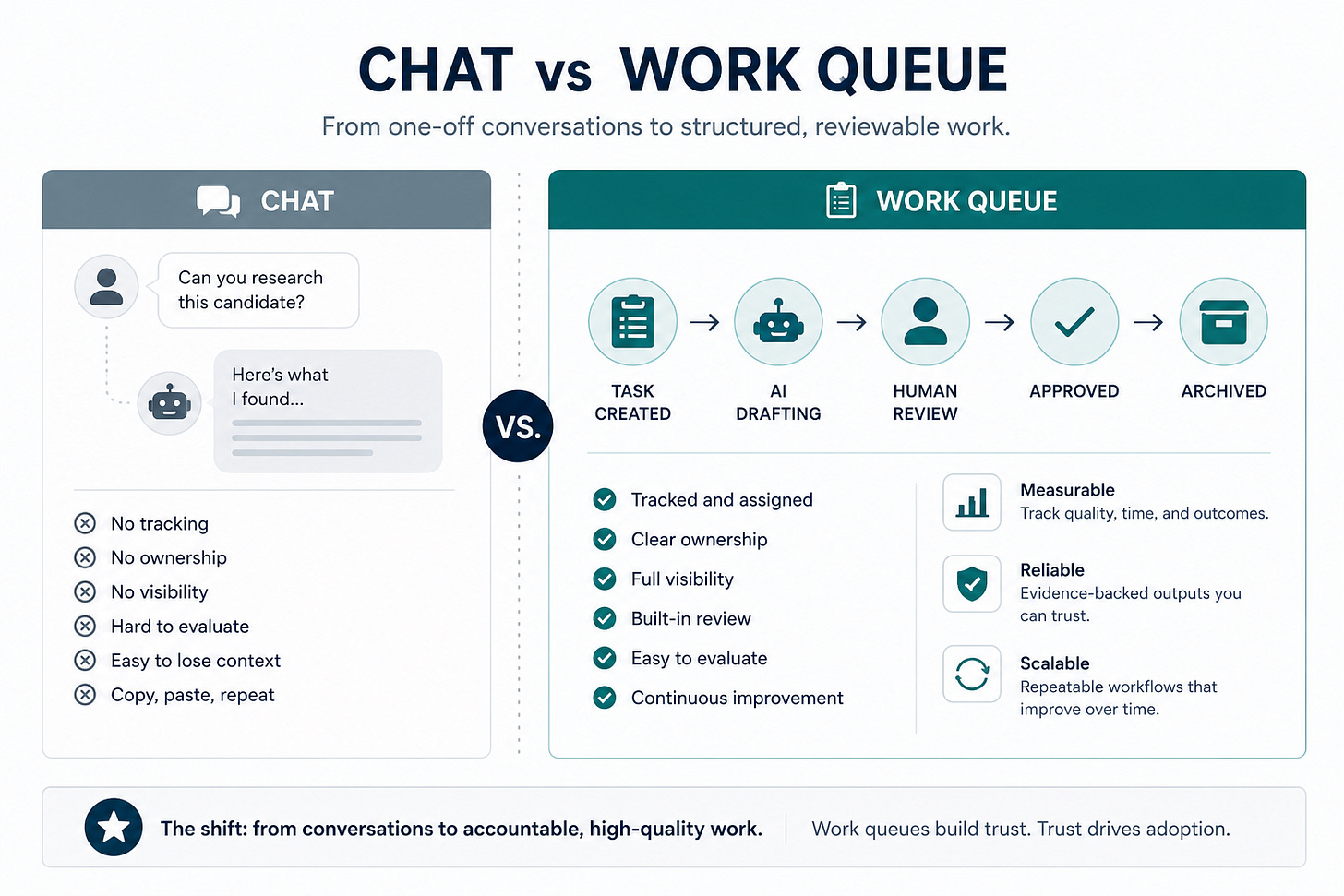

Most teams use AI like a side conversation: paste something in, get something back, move on. That works, but it doesn’t scale.

AI work is becoming managed work. Assigned. Tracked. Evaluated. Reviewed.

That shift matters because recruiting needs cleaner execution:

Cleaner intake packets

Stronger sourcing research

Clearer hiring-manager updates

Evidence-backed summaries

Fewer unsupported claims

Don’t chase autonomous recruiting. Build a disciplined recruiting workbench first.

2-Minute Skim

3 things to know

AI is moving from chat sessions to managed work queues

Output is easy. Trust and evaluation are hard

Treat AI like junior operational capacity, not a decision-maker

2 things to test

Build a one-role recruiting workbench with tracked AI tasks

Create an evaluation set before changing models or prompts

1 thing to ignore

Claims that autonomous agents can run recruiting end-to-end

Executive Brief

What changed this week

Agent infrastructure is getting real

Control planes are emerging

Evaluation is becoming the bottleneck

File generation is closing the loop between AI output and real work

What teams get wrong

They learn about agents and jump to autonomy. That’s backwards.

What to do instead

Design tighter work:

Specific tasks

Known inputs

Defined outputs

Human review

Evaluation loop

Start with one role. Expand only after it beats your current process.

If you can’t evaluate the workflow, you’re not improving it.

What Matters Most This Week

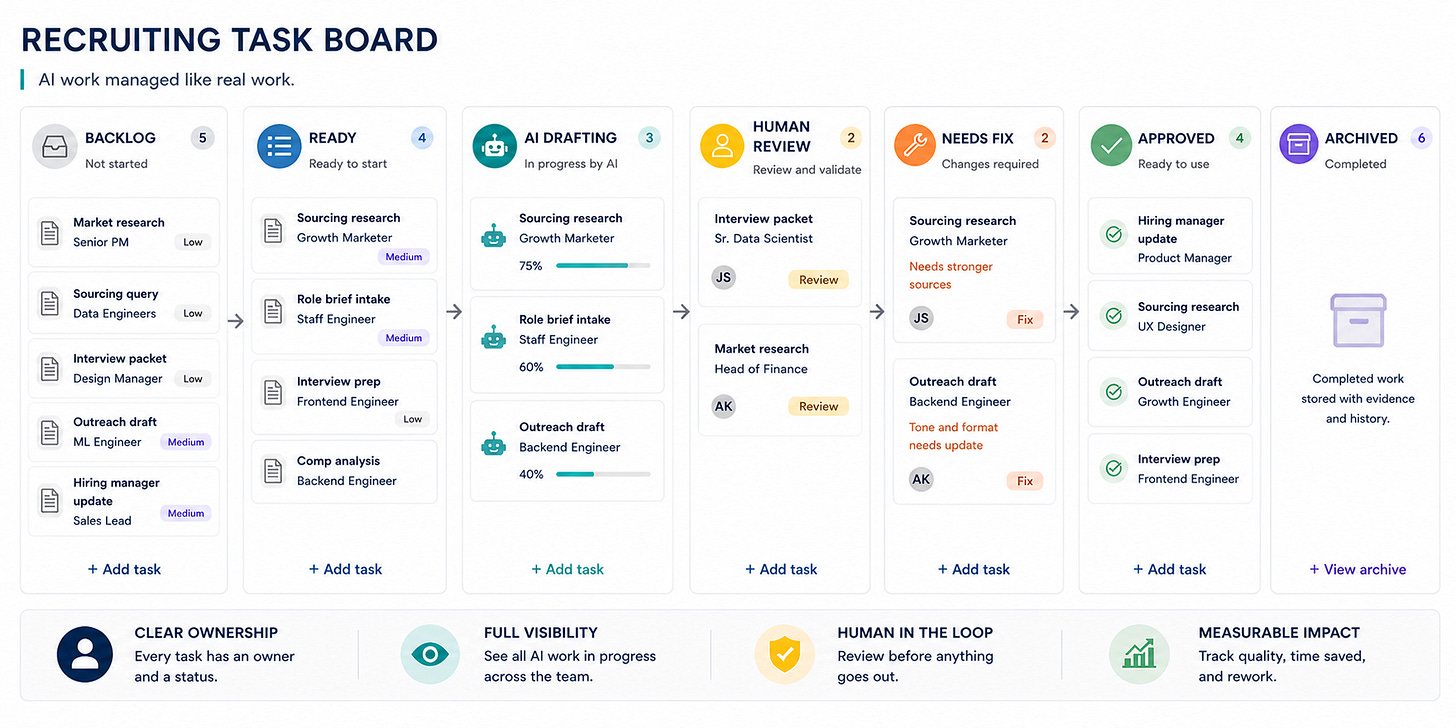

1. Agent work needs a task board

OpenAI’s Symphony treats agent work as items on a task board. Tasks get assigned. Agents execute. Humans review.

Recruiting translation:

Run sourcing research, outreach drafts, interview prep, and hiring-manager updates as tracked tasks.

The future isn’t “AI assistants.” It’s work queues with receipts.

👉 Takeaway: Treat AI work like tracked work, not prompts.

Source: OpenAI, “An open-source spec for Codex orchestration: Symphony.”

2. Agent sprawl is a governance problem

Microsoft Agent 365 signals what’s coming: centralized control, observability, and security across tools.

Unmanaged AI in recruiting isn’t scrappy. It’s untracked candidate data movement.

👉 Takeaway: If you can’t see it, you can’t control it.

Source: Microsoft Security Blog, “Microsoft Agent 365, now generally available, expands capabilities and integrations.”

3. Evaluation is becoming the bottleneck

Hugging Face shows agent evals are expensive and noisy.

Without a fixed test set, you’re guessing.

👉 Takeaway: If you can’t evaluate the workflow, you’re not improving it.

Source: Hugging Face, “AI evals are becoming the new compute bottleneck.”

4. Model swaps can break performance

Same agent. Different model. Different outcome.

LangChain shows 10–20 point swings depending on model tuning.

👉 Takeaway: Treat model upgrades like workflow changes.

Source: LangChain, “Tuning Deep Agents to Work Well with Different Models.”

5. File generation is real leverage

Gemini can now generate structured artifacts directly.

Less copy/paste. More usable output.

This is where recruiting ops wins time.

👉 Takeaway: The biggest gains look boring, and they compound.

Source: Google, “You can now easily generate files in Gemini.”

6. Document agents must preserve evidence

Agents are getting better at handling real files.

That’s useful only if they preserve traceability.

The moment an agent gives a verdict instead of evidence, it has crossed the line.

👉 Takeaway: No evidence, no trust.

Source: LlamaIndex, “LlamaParse MCP: Agentic OCR tools for your AI agents.”
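Part of that traceability can be checked mechanically. Here is a minimal Python sketch. It assumes the agent returns a verbatim quote for each claim. The function names are hypothetical and are not part of LlamaParse or any MCP tool. The sketch flags quotes that are empty or cannot be found in the source document. It catches invented citations, not misreadings, so a human still reads the evidence.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so line wraps don't cause false misses."""
    return re.sub(r"\s+", " ", text).strip().lower()


def untraceable_quotes(quotes: list[str], source_document: str) -> list[str]:
    """Return every cited quote that is empty or not found verbatim in the source."""
    haystack = normalize(source_document)
    flagged = []
    for quote in quotes:
        needle = normalize(quote)
        # An empty citation is not evidence, so it is flagged too.
        if not needle or needle not in haystack:
            flagged.append(quote)
    return flagged


resume = """Led a team of 6 engineers.
Migrated billing to Postgres in 2022."""
cited = ["Led a team of 6 engineers.", "Managed a $2M budget."]
print(untraceable_quotes(cited, resume))  # → ['Managed a $2M budget.']
```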

7. Persistent agents change the workflow

Mistral’s remote agents show the pattern:

Long-running work. Visible actions. Approval gates.

👉 Takeaway: If it can’t show its work, it shouldn’t touch recruiting.

Source: Mistral AI, “Remote agents in Vibe. Powered by Mistral Medium 3.5.”

Playbook: Build a Recruiting Agent Workbench

This is what operational AI in recruiting actually looks like.

Goal:

Turn AI from scattered prompts into a managed workflow for one role.

Setup

Pick one active role with real volume

Define 5 AI-supported tasks:

Market research

Sourcing queries

Outreach drafts

Interview packets

Hiring-manager updates

Define 3 tasks AI cannot do:

Reject candidates

Rank final slates

Infer protected traits

Create a task board:

Backlog → Ready → AI Drafting → Human Review → Needs Fix → Approved → Archived

Assign an owner and a reviewer
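The board is a small state machine. Here is a minimal Python sketch. It assumes one reviewer per task and the column wiring shown in the comments. The class and field names are illustrative and do not come from any specific tool. Every move is checked against the allowed transitions, and only the reviewer can approve work or send it back. Each move is logged as a receipt.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    BACKLOG = "Backlog"
    READY = "Ready"
    AI_DRAFTING = "AI Drafting"
    HUMAN_REVIEW = "Human Review"
    NEEDS_FIX = "Needs Fix"
    APPROVED = "Approved"
    ARCHIVED = "Archived"


# Which column a card may move to next (assumed wiring; adjust to your board).
TRANSITIONS = {
    Status.BACKLOG: {Status.READY},
    Status.READY: {Status.AI_DRAFTING},
    Status.AI_DRAFTING: {Status.HUMAN_REVIEW},
    Status.HUMAN_REVIEW: {Status.NEEDS_FIX, Status.APPROVED},
    Status.NEEDS_FIX: {Status.AI_DRAFTING, Status.HUMAN_REVIEW},
    Status.APPROVED: {Status.ARCHIVED},
    Status.ARCHIVED: set(),
}

# Only the assigned reviewer can approve work or send it back.
REVIEWER_ONLY = {Status.APPROVED, Status.NEEDS_FIX}


@dataclass
class Task:
    title: str
    owner: str
    reviewer: str
    status: Status = Status.BACKLOG
    # (from, to, actor) for every move: the task's receipts.
    history: list[tuple[Status, Status, str]] = field(default_factory=list)

    def can_move(self, new_status: Status, actor: str) -> bool:
        """True if the board allows this move and the actor may make it."""
        if new_status not in TRANSITIONS[self.status]:
            return False
        if new_status in REVIEWER_ONLY and actor != self.reviewer:
            return False
        return True

    def move(self, new_status: Status, actor: str) -> None:
        if not self.can_move(new_status, actor):
            raise ValueError(
                f"{actor} cannot move '{self.title}' from "
                f"{self.status.value} to {new_status.value}"
            )
        self.history.append((self.status, new_status, actor))
        self.status = new_status


task = Task("Sourcing queries: Staff Data Engineer", owner="alex", reviewer="sam")
task.move(Status.READY, "alex")
task.move(Status.AI_DRAFTING, "alex")
task.move(Status.HUMAN_REVIEW, "ai-assistant")
print(task.can_move(Status.APPROVED, "ai-assistant"))  # → False
task.move(Status.APPROVED, "sam")
print(task.status.value)  # → Approved
```

The design choice that matters is the check on `actor`. The AI can push a draft into Human Review, but it can never move its own work to Approved.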

Workflow

Write a one-page role brief

Create reusable task templates

Add 5 real tasks

Run AI against defined prompts

Save outputs as review packets

Score:

Accuracy

Usefulness

Evidence quality

Rework

Approve only after human review

Update prompts based on failure patterns

Review weekly
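The scoring step only pays off if scores roll up into patterns. Here is a minimal Python sketch. It assumes reviewers score accuracy, usefulness, and evidence quality from 1 to 5 and log rework in minutes; the scale and the names are assumptions. The sketch averages scores per task type and names the weakest dimension, which is where the next prompt update should go.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Review:
    task_type: str          # e.g. "outreach draft"
    accuracy: int           # 1-5 reviewer score (scale is an assumption)
    usefulness: int         # 1-5
    evidence_quality: int   # 1-5
    rework_minutes: int     # time the reviewer spent fixing the output


def weekly_summary(reviews: list[Review]) -> dict[str, dict[str, float]]:
    """Average each score per task type for the weekly review."""
    by_type: dict[str, list[Review]] = defaultdict(list)
    for r in reviews:
        by_type[r.task_type].append(r)
    summary = {}
    for task_type, rs in by_type.items():
        summary[task_type] = {
            "accuracy": mean(r.accuracy for r in rs),
            "usefulness": mean(r.usefulness for r in rs),
            "evidence_quality": mean(r.evidence_quality for r in rs),
            "rework_minutes": mean(r.rework_minutes for r in rs),
        }
    return summary


def weakest_dimension(scores: dict[str, float]) -> str:
    """The lowest 1-5 score is the first candidate for a prompt fix."""
    quality = {k: v for k, v in scores.items() if k != "rework_minutes"}
    return min(quality, key=quality.get)


reviews = [
    Review("outreach draft", accuracy=4, usefulness=4, evidence_quality=2, rework_minutes=10),
    Review("outreach draft", accuracy=5, usefulness=3, evidence_quality=2, rework_minutes=6),
    Review("hiring-manager update", accuracy=5, usefulness=5, evidence_quality=4, rework_minutes=2),
]
for task_type, scores in weekly_summary(reviews).items():
    print(task_type, "-> fix first:", weakest_dimension(scores))
```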

Prompt:

You are supporting recruiting operations for one role.

Rules:

- Do not make hiring decisions

- Do not rank candidates without explicit criteria

- Cite evidence for every claim

- Flag uncertainty

- Produce a reviewable output

Role brief:

[paste role brief]

Task type:

[market research / sourcing query ideas / outreach draft / interview packet / hiring-manager update]

Inputs:

[paste sanitized inputs]

Output format:

[define exact format]
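The template can be filled by code so nobody skips a field. Here is a minimal Python sketch using the standard library’s `string.Template`. The function names and the email check are assumptions, and the email pattern is only a rough screen, not a PII filter. The sketch refuses task types outside the five approved ones, blank fields, and inputs that still contain an email address.

```python
import re
from string import Template

ALLOWED_TASKS = {
    "market research",
    "sourcing query ideas",
    "outreach draft",
    "interview packet",
    "hiring-manager update",
}

PROMPT = Template("""You are supporting recruiting operations for one role.

Rules:
- Do not make hiring decisions
- Do not rank candidates without explicit criteria
- Cite evidence for every claim
- Flag uncertainty
- Produce a reviewable output

Role brief:
$role_brief

Task type:
$task_type

Inputs:
$inputs

Output format:
$output_format
""")

# Rough screen for leftover email addresses (an assumption, not a full PII check).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")


def problems(role_brief: str, task_type: str, inputs: str, output_format: str) -> list[str]:
    """List every reason this request should not be sent to the model."""
    found = []
    if task_type not in ALLOWED_TASKS:
        found.append(f"'{task_type}' is not an approved AI task")
    fields = {"role brief": role_brief, "inputs": inputs, "output format": output_format}
    found += [f"{name} is empty" for name, value in fields.items() if not value.strip()]
    if EMAIL.search(inputs):
        found.append("inputs contain an email address; sanitize first")
    return found


def build_prompt(role_brief: str, task_type: str, inputs: str, output_format: str) -> str:
    found = problems(role_brief, task_type, inputs, output_format)
    if found:
        raise ValueError("; ".join(found))
    return PROMPT.substitute(
        role_brief=role_brief.strip(),
        task_type=task_type,
        inputs=inputs.strip(),
        output_format=output_format.strip(),
    )
```

Asking for "reject candidates" as a task type fails here by construction, which keeps the “AI cannot do” list enforceable rather than aspirational.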

Common Mistakes

Letting AI infer criteria from vague job descriptions

Asking for recommendations instead of evidence

Sending AI outreach without validation

Changing tools without re-evaluation

What Good Looks Like

Every task has an owner, inputs, and status

Outputs cite evidence and uncertainty

Recruiters can explain what AI did

Hiring managers get cleaner artifacts, not more noise

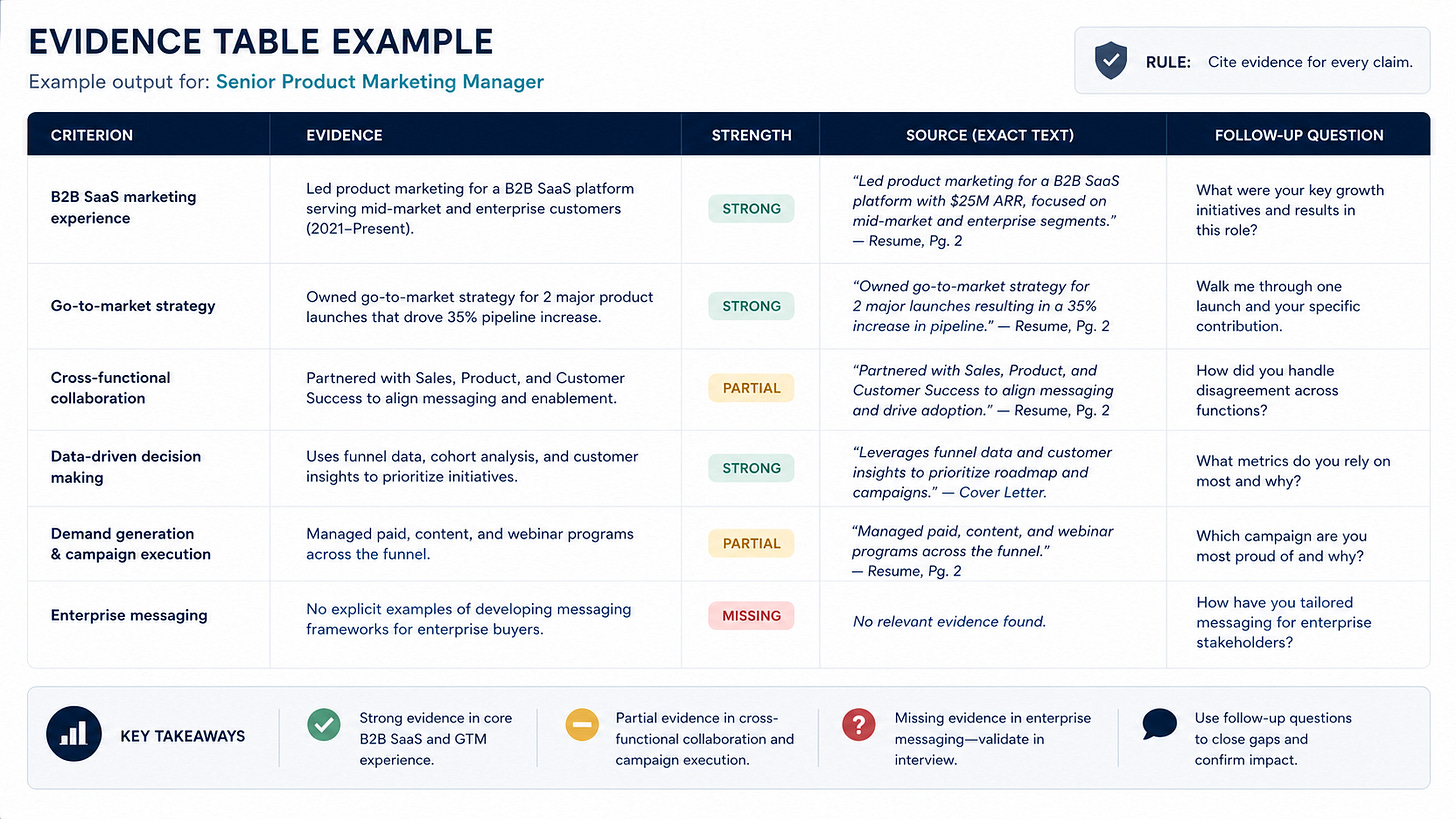

Prompt Chain: Evidence-Based Candidate Packet

This is how you force AI output to stay reviewable.

Use this to convert sanitized candidate materials into a recruiter-reviewed packet tied to role criteria.

System prompt:

You are a recruiting operations assistant. You organize candidate evidence for human review.

You must not decide whether to advance or reject a candidate. You must not infer protected traits, personality, culture fit, age, health, nationality, race, gender, disability, or family status. You must cite evidence from the provided materials and flag missing information clearly.

Prompt 1:

Extract evidence.

Role criteria:

[paste must-have and nice-to-have criteria]

Candidate materials:

[paste sanitized resume, notes, portfolio excerpts, or transcript excerpts]

Return a table with:

- Criterion

- Evidence found

- Evidence strength: Strong / Partial / Missing

- Source text

- Follow-up question for human interviewer

Prompt 2:

Using only the evidence table, list:

- Unsupported claims that should be removed

- Criteria with missing evidence

- Questions a recruiter should ask before presenting the candidate

- Any places where the input could bias the reviewer

Prompt 3:

Create a concise candidate packet for human review:

- Candidate snapshot

- Evidence by must-have criterion

- Open questions

- Suggested interview focus areas

- Do not include a recommendation to advance or reject

When this breaks: the criteria are vague, the resume is too thin, the model invents judgment language, or the team uses the packet as a decision instead of a review artifact.
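Two of those failure modes can be screened before the packet reaches a reviewer. Here is a minimal Python sketch. The names are hypothetical, and the phrase list is an assumption you would grow from what reviewers actually catch. `table_issues` finds must-haves with no row and ratings with no source text. `verdict_language` flags judgment phrases in the final packet. A keyword screen misses paraphrases, so it supplements human review rather than replacing it.

```python
import re
from dataclasses import dataclass


@dataclass
class EvidenceRow:
    criterion: str
    evidence: str
    strength: str      # "Strong" / "Partial" / "Missing"
    source_text: str
    follow_up: str


# Hypothetical starter list; extend it with phrases reviewers keep removing.
VERDICT_PHRASES = [
    r"\bstrong hire\b", r"\bno hire\b", r"\brecommend\w*", r"\badvance\b",
    r"\breject\b", r"\bculture fit\b", r"\bnot a fit\b", r"\bgood fit\b",
]
VERDICT = re.compile("|".join(VERDICT_PHRASES), re.IGNORECASE)


def table_issues(rows: list[EvidenceRow], must_haves: list[str]) -> list[str]:
    """Flag uncovered must-haves and ratings that cite no source text."""
    issues = []
    covered = {r.criterion for r in rows}
    issues += [f"no row for must-have: {c}" for c in must_haves if c not in covered]
    for r in rows:
        if r.strength not in {"Strong", "Partial", "Missing"}:
            issues.append(f"{r.criterion}: unknown strength '{r.strength}'")
        elif r.strength != "Missing" and not r.source_text.strip():
            issues.append(f"{r.criterion}: rated {r.strength} but cites no source text")
    return issues


def verdict_language(packet: str) -> list[str]:
    """Return judgment phrases that should not appear in a review artifact."""
    return [m.group(0) for m in VERDICT.finditer(packet)]


rows = [
    EvidenceRow("Python in production", "Built ETL services", "Strong",
                "Built Python ETL services processing 2TB/day", "Which parts did you own?"),
    EvidenceRow("Team leadership", "Mentions leading", "Partial", "", "How many direct reports?"),
]
must_haves = ["Python in production", "Team leadership", "On-call experience"]
packet = "Candidate snapshot: 6 years in data engineering. Strong hire for this team."
print(table_issues(rows, must_haves))
print(verdict_language(packet))  # → ['Strong hire']
```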

Fast Wins

Build a simple AI workflow inventory

Turn one update into a reusable template

Create a 20-case evaluation sheet

Add “Reviewed by [owner]” to outputs

Publish a “do not use AI for” list
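The inventory can live in a spreadsheet; what matters is the columns. Here is a minimal Python sketch with hypothetical field names. It uses the playbook’s three “AI cannot do” tasks as the do-not-use list. The sketch surfaces workflows with no owner, workflows that touch candidate data with no reviewer, and anything on the banned list.

```python
from dataclasses import dataclass
from typing import Optional

# The playbook's three "AI cannot do" tasks, used as the do-not-use list.
DO_NOT_USE = {"reject candidates", "rank final slates", "infer protected traits"}


@dataclass
class AIWorkflow:
    name: str
    tool: str
    purpose: str
    touches_candidate_data: bool
    owner: Optional[str] = None
    reviewer: Optional[str] = None


def inventory_gaps(workflows: list[AIWorkflow]) -> list[str]:
    """List every governance gap the inventory makes visible."""
    gaps = []
    for w in workflows:
        if w.purpose in DO_NOT_USE:
            gaps.append(f"{w.name}: '{w.purpose}' is on the do-not-use list")
        if not w.owner:
            gaps.append(f"{w.name}: no owner")
        if w.touches_candidate_data and not w.reviewer:
            gaps.append(f"{w.name}: handles candidate data with no reviewer")
    return gaps


inventory = [
    AIWorkflow("Weekly HM update", "chat assistant", "hiring-manager update",
               touches_candidate_data=False, owner="alex", reviewer="sam"),
    AIWorkflow("Resume triage", "browser extension", "reject candidates",
               touches_candidate_data=True),
]
for gap in inventory_gaps(inventory):
    print(gap)
```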

Strategic Experiments

Recruiting Agent Workbench

Hypothesis:

Task-based AI reduces admin time without lowering quality.

Test:

Run 20 AI-supported tasks with review.

Measure:

Time saved, rework, usefulness, errors, confidence.
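"Time saved" is easy to overstate if you only count drafting. Here is a minimal Python sketch with illustrative names. It assumes you log per-task minutes for prompting, review, and rework and compare them with a baseline estimate. Net savings subtract everything a human still spends. The hypothesis holds only if total time drops and the average quality score does not.

```python
from dataclasses import dataclass


@dataclass
class TaskTiming:
    baseline_minutes: float  # time the task takes today without AI
    prompt_minutes: float    # preparing sanitized inputs and running the AI
    review_minutes: float    # human review of the draft
    rework_minutes: float    # fixing what review caught

    @property
    def net_saved(self) -> float:
        """Minutes saved after paying for prompting, review, and rework."""
        spent = self.prompt_minutes + self.review_minutes + self.rework_minutes
        return self.baseline_minutes - spent


def hypothesis_holds(timings: list[TaskTiming], ai_quality: float, baseline_quality: float) -> bool:
    """Total time must go down AND the average quality score must not drop."""
    return sum(t.net_saved for t in timings) > 0 and ai_quality >= baseline_quality


timings = [
    TaskTiming(baseline_minutes=45, prompt_minutes=5, review_minutes=10, rework_minutes=5),
    TaskTiming(baseline_minutes=30, prompt_minutes=5, review_minutes=15, rework_minutes=15),
]
print([t.net_saved for t in timings])  # → [25, -5]
print(hypothesis_holds(timings, ai_quality=4.1, baseline_quality=4.0))  # → True
```

The second task is slower than doing it by hand. A drafting-only measure would hide exactly that kind of result.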

Evaluation Set

Hypothesis:

A fixed test set catches regressions.

Test:

Compare prompts and models on the same cases.

Measure:

Accuracy, missing data, tone, pass rate.
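The mechanics of a fixed test set are simple. Here is a minimal Python sketch. Each case lists facts the output must mention and phrases it must not contain. Both variants run on the same cases, and any case the current setup passed but the proposed one fails is reported as a regression. The two variant functions are stubs standing in for real prompt and model calls. Substring grading is a crude stand-in chosen for brevity; judging something like tone usually needs rubric or human grading.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    case_id: str
    inputs: str
    must_include: tuple[str, ...]           # facts the output has to mention
    must_not_include: tuple[str, ...] = ()  # e.g. verdict phrases


def passes(output: str, case: EvalCase) -> bool:
    """Crude substring grader: required facts present, banned phrases absent."""
    text = output.lower()
    has_required = all(s.lower() in text for s in case.must_include)
    has_banned = any(s.lower() in text for s in case.must_not_include)
    return has_required and not has_banned


def compare(cases: list[EvalCase],
            baseline: Callable[[str], str],
            candidate: Callable[[str], str]) -> dict:
    """Run both variants on the same fixed cases; report pass rates and regressions."""
    base = {c.case_id: passes(baseline(c.inputs), c) for c in cases}
    cand = {c.case_id: passes(candidate(c.inputs), c) for c in cases}
    return {
        "baseline_pass_rate": sum(base.values()) / len(cases),
        "candidate_pass_rate": sum(cand.values()) / len(cases),
        # Cases the current setup passed that the proposed change now fails.
        "regressions": [cid for cid, ok in base.items() if ok and not cand[cid]],
    }


# Stand-ins for "current prompt + model" and "proposed prompt + model".
def current_variant(inputs: str) -> str:
    return f"Evidence: {inputs}"


def proposed_variant(inputs: str) -> str:
    return f"Strong hire. {inputs}"


cases = [
    EvalCase("c1", "Led migration to Postgres", ("postgres",), ("strong hire",)),
    EvalCase("c2", "No management experience listed", ("management",), ("strong hire",)),
]
print(compare(cases, current_variant, proposed_variant))
```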

AI Artifact Packaging Sprint

Hypothesis:

File generation beats sourcing automation for time savings.

Test:

Convert reporting and docs into AI templates.

Measure:

Prep time, rework, adoption.
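This sketch does not use Gemini or any Google API. It shows the template half of the sprint, with assumed field names. Structured pipeline data goes in, and a consistent hiring-manager update file comes out. The “Reviewed by” line is built into every artifact. `write_update` saves the rendered text as a Markdown file.

```python
from dataclasses import dataclass
from datetime import date
from pathlib import Path


@dataclass
class PipelineSnapshot:
    role: str
    week_of: date
    stage_counts: dict[str, int]  # e.g. {"Sourced": 40, "Screened": 12}
    risks: list[str]
    asks: list[str]               # decisions needed from the hiring manager
    reviewed_by: str


def render_update(s: PipelineSnapshot) -> str:
    """Render the same sections in the same order every week."""
    lines = [f"# {s.role}: week of {s.week_of.isoformat()}", "", "## Pipeline"]
    lines += [f"- {stage}: {count}" for stage, count in s.stage_counts.items()]
    lines += ["", "## Risks"] + [f"- {r}" for r in (s.risks or ["None flagged"])]
    lines += ["", "## Decisions needed"] + [f"- {a}" for a in (s.asks or ["None this week"])]
    lines += ["", f"Reviewed by {s.reviewed_by}"]
    return "\n".join(lines) + "\n"


def write_update(s: PipelineSnapshot, out_dir: Path) -> Path:
    """Save the update as a dated Markdown file and return its path."""
    path = out_dir / f"{s.week_of.isoformat()}-hm-update.md"
    path.write_text(render_update(s), encoding="utf-8")
    return path


snap = PipelineSnapshot(
    role="Staff Data Engineer",
    week_of=date(2025, 1, 6),
    stage_counts={"Sourced": 40, "Screened": 12, "Onsite": 3},
    risks=["Two onsite candidates have competing offers"],
    asks=[],
    reviewed_by="sam",
)
print(render_update(snap))
```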

The Shift Is Happening

Recruiting is moving from intuition and effort to systems and evidence.

The teams that win won’t be the ones using the most AI tools. They’ll be the ones who operationalize them.

Work queues. Review loops. Evaluation sets. Clear ownership.

That’s the difference between “AI-assisted recruiting” and a recruiting function that actually scales.

What You’ll Get Here

If this resonates, this is what I’ll keep breaking down:

Practical AI workflows you can implement

Real recruiting systems

Playbooks that improve speed, quality, and trust

Clear guidance on what to ignore

No hype. No generic advice. Just what works.

Subscribe if You’re Building

If you’re building, fixing, or scaling a recruiting function, this is for you.

Subscribe to get the next issue.